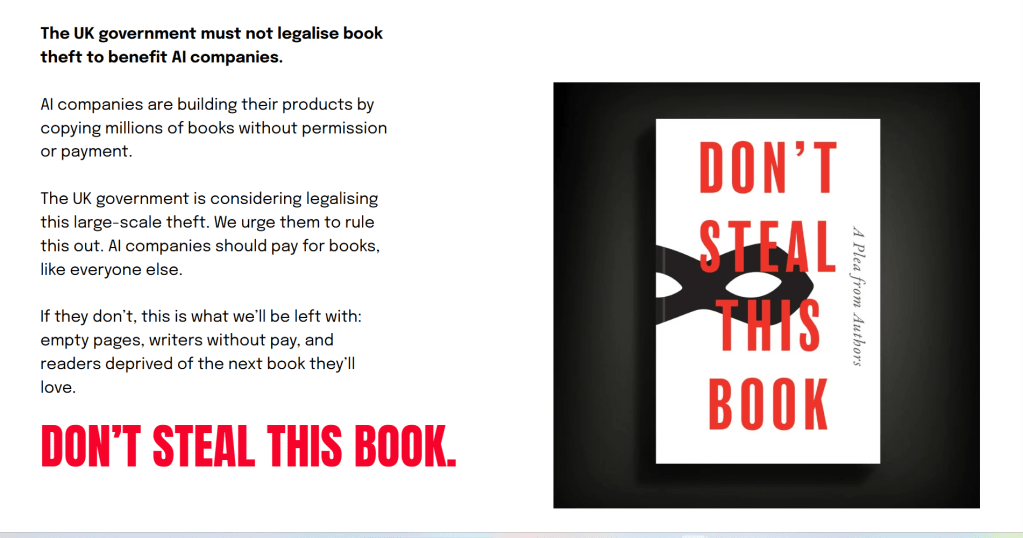

Nearly all creative acts start with the challenge of a blank page. At the 2026 London Book Fair, amidst the usual hum of international rights deals and over-caffeinated networking, a peculiar volume began to circulate, one that contained ten thousand names of authors but not a single sentence of prose. It was called ‘Don’t Steal This Book’, and its emptiness was the loudest thing in the room. This wasn’t a conceptual art piece or a bureaucratic error; it was a visceral diagnosis of a culture currently being strip-mined for “data” by companies that know the price of everything and the value of nothing. It was the sound of the world’s greatest storytellers, from Kazuo Ishiguro to Malorie Blackman, collectively putting down their pens to ask a single, pragmatic question: if you remove the human from the narrative, what exactly are you left with besides an empty shell?

The digital landscape of the mid-2020s is defined by a profound ontological shift, one that began not with a technological breakthrough, but with a systemic crisis of consent. For the creative professional, it starts with a distinctive, unsettling realisation: that the ground of intellectual property is no longer solid, but is being liquefied into data. We are currently witnessing an industrial-scale enclosure of the creative commons, where the lifelong mastery of the illustrator, the songwriter, and the journalist is being treated as raw, uncompensated material for a new informational autocracy.

This is not merely a disruption of market dynamics; it is a systematic deconstruction of the human element in culture. The guiding principle of this era, for those who value the integrity of the individual voice, must be “to my own self be true.” As we navigate the period between 2022 and 2026, we see that the early, visceral protests on platforms like ArtStation were the first tremors of a much larger, more sophisticated resistance. What began as a defensive reflex has evolved into a resilient, good-humoured, yet steely-eyed defence of human agency against an algorithmically-driven void.

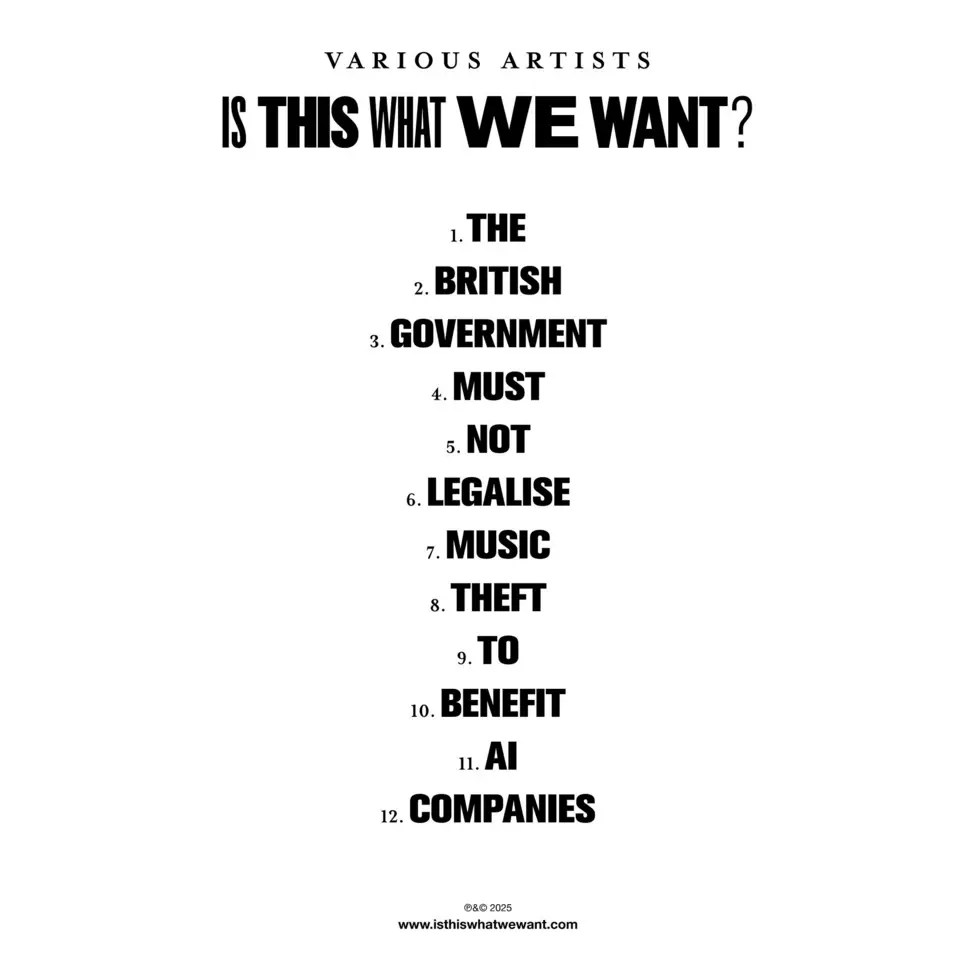

The foundational grievance uniting the visual arts, literature, and music is a triad of missing pillars: consent, credit, and compensation. The current legislative push in the UK and elsewhere for “opt-out” systems amounts to bureaucratic gaslighting. It effectively shifts the burden of policing the global digital commons onto the individual creator, requiring a freelance artist to act as a digital bailiff against a thousand invisible scrapers. To frame this as a “tech-friendly” evolution is to ignore the reality of industrial-scale extraction. When icons such as Kate Bush and Paul McCartney released an album of studio silence in early 2025, they offered a stark, sonic diagnosis of our current trajectory: a culture where the human voice is strategically muted to make way for derivative, synthetic echoes.

This extraction is nowhere more visible than in the current litigation defining the boundary between “fair use” and “systemic piracy.” The legal armour of the tech giants, the claim that generative AI is “transformative” in the same manner as a human student, is suffering from terminal fatigue. Courts are beginning to recognise that downloading seven million pirated books from “shadow libraries,” as seen in the landmark Anthropic settlement, is not an act of learning but an act of predatory acquisition. Cases like Andersen v. Stability AI are shoring up the flood defences by arguing that a model which “embodies” protected works to generate competing derivatives is not a tool of progress, but a mechanism for market displacement.

The existential threat is perhaps most poignant in the realm of identity and “passing off.” One of my singing teachers at the English Folk Dance and Song Society (EFDSS) has experienced this. What happened to British folk singer Emily Portman in 2025 is a definitive case study of the new “dystopian” reality. The “AI slop” albums uploaded to her verified profiles, mimicking her vocal cadence and instrumentation, were not a technical error; they were an identity heist. This trend toward “royalty farming” using synthetic replicas highlights the inadequacy of current platform safeguards. When Spotify or Apple Music dismisses these incidents as clerical errors, it reveals a profound disregard for the “Human Premium”: the unique, irreplaceable connection between an artist and their audience that cannot be reduced to a prompt.

Furthermore, we must confront the hollowing out of the Fourth Estate. The phenomenon of “Google Zero”—where search referrals collapse as AI answer engines provide verbatim summaries of investigative reporting—represents a desertification of the epistemic landscape. When John Carreyrou or the New York Times sue for the “deliberate theft” of their archives, they are fighting for the survival of primary-source truth. AI can aggregate data with chilling efficiency, but it lacks the moral courage to interview a whistleblower or the legal skin in the game to hold power to account. A society that trades investigative depth for the convenience of an automated summary has lost its grip on reality. The zone is truly flooded with shit, creating a systemic failure of trust.

However, an insightful analysis must also acknowledge the messier, hidden realities of this transition. Beneath the heroic narrative of resistance lies a “tragedy of the commons.” Econometric studies from 2025 show a significant “digital withdrawal,” with artists cutting their public output by over 30% to avoid being harvested. This talent drain creates a vacuum increasingly filled by “beige” content, a collective flattening of imagination where work clusters around the statistical middle of the training data. There is also the quiet, pragmatic hypocrisy of survival: many creators privately utilise AI as a “co-pilot” for efficiency while publicly maintaining an anti-AI stance. This is not a moral failure, but a symptom of a system that demands infinite content while devaluing the time it takes to create it.

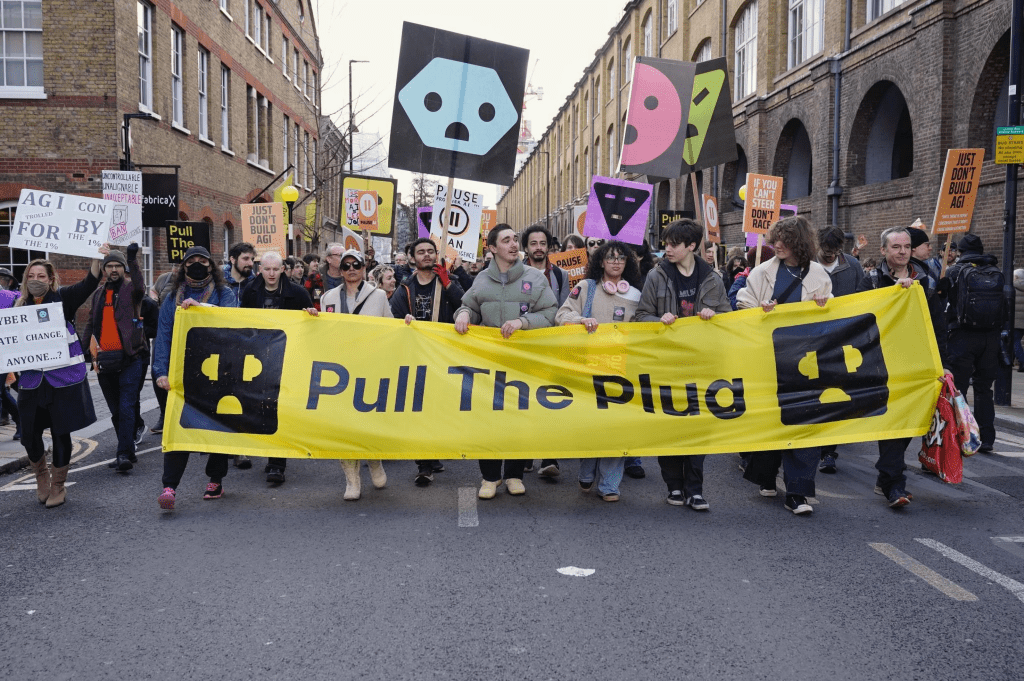

Despite this, a resilient optimism is emerging through grassroots activism. The “March Against the Machines” in London in February 2026, spearheaded by groups like Pull The Plug and PauseAI, marked the UK’s largest street-level rejection of unregulated AI rollout. These movements, alongside the “Luddite Renaissance” in the US, represent a shift from digital withdrawal to civic engagement. They are demanding democratic oversight and “Real People in Real Time” interactions, recognising that “AI slop” is a choice, not an inevitability. We are building a new humanism, one that acknowledges the difficulty of the situation but refuses to be hopeless.

The cage is being rattled, and for the first time, the momentum is shifting toward a human-authored future. We are moving beyond the internal shift in media curation to tangible, external civic action. The path forward is not found in a retreat to the past, but in a pragmatic demand for a future that treats human creativity as a premium asset, not a raw resource.

The following call to action is therefore not a suggestion, but a civic necessity for the preservation of our cultural integrity:

First, we must reclaim our agency as patrons of the authentic. If you value a creative voice, you must support it directly; subscriptions and physical acquisitions are the primary armour against the “Google Zero” referral collapse. Second, we must implement a rigorous personal policy of “informational hygiene.” Use protective tools like Glaze or Nightshade to shield your digital output against style mimicry, and demand absolute transparency from the platforms you inhabit.

Finally, escalate your involvement from individual awareness to collective participation. Join the most vital, and perhaps most boring-sounding, professional guilds and unions available to you—be it the Musicians’ Union, the Society of Authors, or the National Union of Journalists. It is in these structured, democratic bodies that we will forge the statutory compensation models and “opt-in” licensing frameworks required to shore up the flood defences. Write to your MP; demand that the UK government prioritises the human creative economy over the convenience of unregulated extraction. We are the authors of this century. Let us ensure the pen remains in our hands. Let’s get to work.

Postscript

As momentum behind meaningful legislation on AI in the UK has appeared to stall, new research from the Ada Lovelace Institute shows that this delay – and the government’s broader shift away from regulation – is increasingly out of step with public attitudes.

The nationally representative polling examines not only whether the UK public support regulation of AI, but also how they expect it to function, and where gaps between public expectations and policy ambition may lie. Key findings include:

- The public support independent regulation. The UK public do not trust private companies to self-regulate. There is strong public support (89%) for an independent regulator for AI, equipped with enforcement powers.

- The public prioritise fairness, positive social impacts and safety. AI is firmly embedded in public consciousness and 91% of the public feel it is important that AI systems are developed and used in ways that treat people fairly. They want this to be prioritised over economic gains, speed of innovation and international competition when presented with trade-offs.

- The public feel disenfranchised and excluded from AI decision-making, and mistrust key institutions. Many people feel excluded from government decision-making. 84% fear that, when regulating AI, the government will prioritise its partnerships with large technology companies over the public interest.

- The public expect ongoing monitoring and clear lines of accountability. People support mechanisms such as independent standards, transparency reporting and top-down accountability to ensure effective monitoring of AI systems, both before and after they are deployed.

Nuala Polo, UK Public Policy Lead at the Ada Lovelace Institute, said:

“Our research is clear: there is a major misalignment between what the UK public want and what the government is offering in terms of AI regulation. The government is betting big on AI, but success requires public trust. When people do not trust that government policy will protect them, they are less likely to adopt new technologies, and more likely to lose confidence in public institutions and services, including the government itself.”

Michael Birtwistle, Associate Director at the Ada Lovelace Institute, said:

“Examples of the unmanaged risks – and sometimes fatal harms – of AI systems are increasingly making the headlines. Trust is built with meaningful incentives to manage harm. We see these incentives in food, aviation and medicines – consequential technologies like AI should not be treated any differently. Continued inaction on AI harms will come with serious costs to the potential benefits of adoption.”

Selected References and Further Reading

Legal Case Law and Settlements

- Andersen v. Stability AI Ltd., No. 3:23-cv-00201 (N.D. Cal. 2024). Link to Court Listener

- Bartz v. Anthropic PBC, No. 3:24-cv-05417 (N.D. Cal. 2025). Regarding the $1.5 billion settlement for pirated datasets.

- The New York Times Co. v. Microsoft Corp. & OpenAI, No. 1:23-cv-11195 (S.D.N.Y. 2023). NYT Case Overview

- Justice v. Suno, Inc. & Woulard v. Udio (2025). Independent musicians challenging unlicensed sound recording training.

Policy and Legislation

- Tennessee ELVIS Act (Ensuring Likeness Voice and Image Security Act), 2024. TN.gov Announcement

- European Union AI Act, Regulation (EU) 2024/1689. Official EU Text

- UK Intellectual Property Office (IPO): Consultations on Text and Data Mining (TDM) and AI Copyright. UK IPO Portal

- Ada Lovelace Institute: Policy research on AI regulation and public attitudes. https://www.adalovelaceinstitute.org/

Artist Advocacy and Reports

- The Musicians’ Union & Society of Authors: Joint Statement on AI and the “Make It Fair” Campaign (2025). Musicians’ Union Website

- UNESCO Report: “The Impact of Generative AI on the Creative Economy” (2025/2026 Forecast). UNESCO Culture Sector

- Reuters Institute Digital News Report 2025/2026: Analysis of “Google Zero” and search referral decline. Reuters Institute

Activist Groups and Technological Defences

- Pull The Plug: Grassroots UK activists and “March Against the Machines” organisers. https://pulltheplug.uk/ Pull The Plug Instagram

- Don’t Steal This Book – An empty book from almost 10,000 authors, protesting the theft of books by AI companies to train AI models. https://www.dontstealthisbook.com/

- PauseAI: International movement for the temporary suspension of frontier AI training. PauseAI Official Site

- Glaze & Nightshade Project: University of Chicago tools for protecting artists against style mimicry. Glaze Project

Media Coverage of Key Incidents

- BBC News: “Folk singer Emily Portman on AI identity fraud” (2025). BBC Article

- The Guardian: “Don’t Steal This Book: Authors protest AI at London Book Fair” (2026).

- Blood in the Machine: Coverage of the “Luddite Renaissance” and youth-led tech refusal. Blood in the Machine

Like many writers in 2026, I use AI tools as part of my process, in this case Grok, Perplexity, Grammarly and Gemini, to help with research, brainstorming, exploration, generating initial drafts, and organising complex thoughts. However, this blog is not AI-generated content.

Every post is the result of my own research, lived experience, and extensive rewriting.

The final arguments, metaphors, and conclusions are mine alone. I disclose this because I believe the fight for human creativity begins with honesty about how we create.