Ever had that strange feeling? You mention needing a new garden fork in a message, and for the next week, every corner of the internet is suddenly waving one in your face. It’s a small thing, a bit of a joke, but it’s a sign of something much bigger, a sign that the digital world—a place of incredible creativity and connection—doesn’t quite feel like your own anymore.

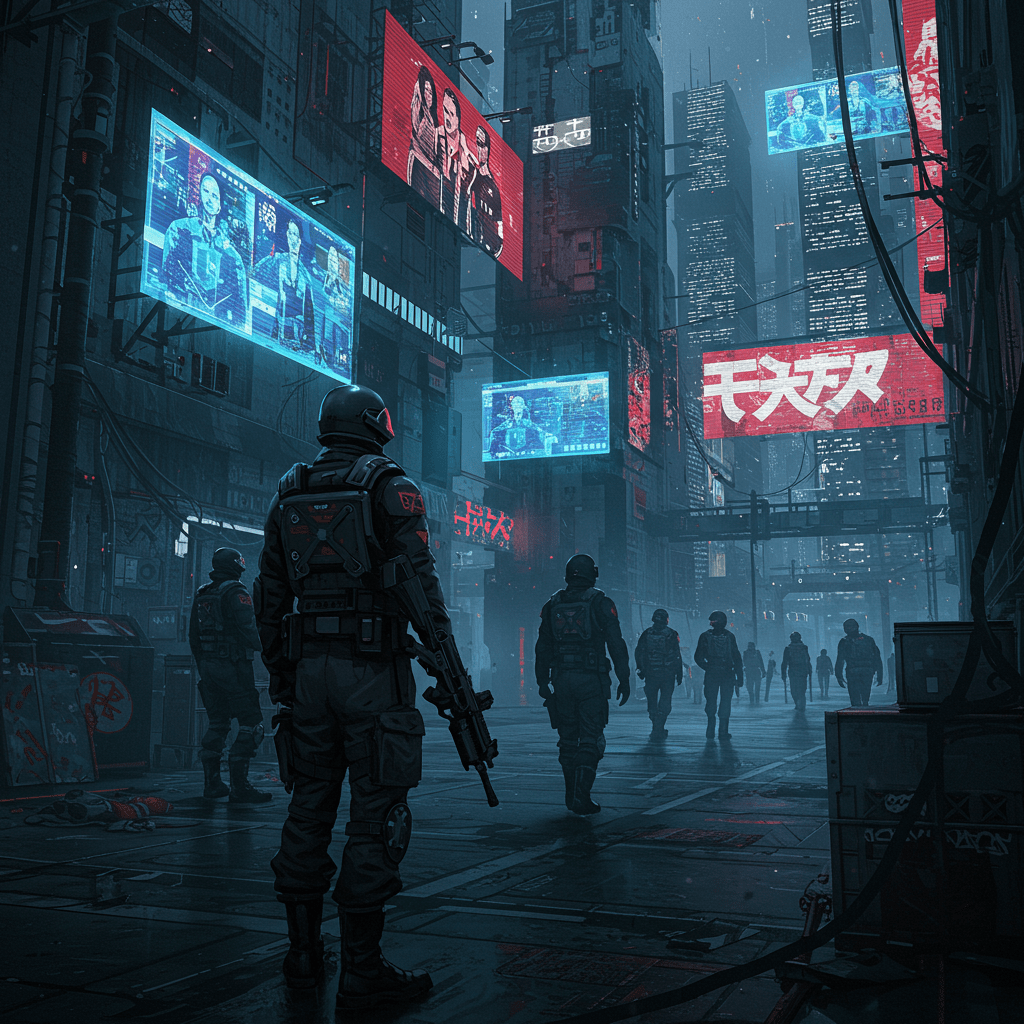

The truth is, and let’s be honest about it, we’ve struck a strange bargain. We’re not really the customers of these huge tech companies; in a funny sort of way, we’re the product. We leave a trail of digital breadcrumbs with every click and share, not realising they’re being gathered for someone else’s feast. Our digital lives are being used to train algorithms that are learning to anticipate our every move. It’s a bit like living in a house with glass walls, having forgotten who’s looking in or why. We’ve drifted into a new kind of system, a techno-feudalism, where a handful of companies own the infrastructure, write the rules we blithely agree to, and profit from the very essence of us.

This isn’t some far-off problem; it’s happening right here on our doorstep. Take Palantir, a US spy-tech firm now managing a massive platform of our NHS patient data. They’re also working with UK police forces, using their tech to build surveillance networks that can track everything from our movements to our political views. Even local councils are getting in on the act, with Coventry reviewing a half-a-million-pound deal with the firm after people, quite rightly, got worried. This is our data, our health records, our lives.

When you see how engineered the whole system is, you can’t help but ask: why aren’t we doing more to protect ourselves? Why do we have more rights down at the DVLA than we do online? Here in the UK, we have laws like the GDPR and the new Data (Use and Access) Act 2025, which sound good on paper. But in practice, they’re riddled with loopholes, and recent changes have actually made it easier for our data to be used without clear consent. Meanwhile, data brokers are trading our information with little oversight, creating risks that the government itself has acknowledged are a threat to our privacy and security.

It feels less like a mistake and more like the intended design.

This isn’t just about annoying ads. Algorithms are making life-changing decisions. In some English councils, AI tools have been found to downplay women’s health issues, baking gender bias right into social care. Imagine your own mother or sister’s health concerns being dismissed not by a doctor, but by a dispassionate algorithm that was never taught to listen properly. Amnesty International revealed last year how nearly three-quarters of our police forces are using “predictive” tech that is “supercharging racism” by targeting people based on biased postcode data. At the same time, police are rolling out more live facial recognition vans, treating everyone on the street like a potential suspect—a practice we know discriminates against people of colour. Even Sainsbury’s is testing it to stop shoplifters. This isn’t the kind, fair, and empathetic society we want to be building.

So, when things feel this big and overwhelming, it’s easy to feel a bit lost. But this is where we need to find that bit of steely grit. This is where we say, “Right, what’s next?”

If awareness isn’t enough, what’s the one thing that could genuinely change the game? It’s a Digital Bill of Rights. Think of it not as some dry legal document, but as a firewall for our humanity. A clear, binding set of principles that puts people before profit.

So, if we were to sit down together and draft this charter, what would be our non-negotiables? What would we demand? It might look something like this:

- The right to digital privacy. The right to exist online without being constantly tracked and profiled unless we have given our clear, ongoing, and revocable consent. Period.

- The right to human judgment. If a machine makes a significant decision about you, such as whether you get a job or a loan, you should always have the right to have a human review it. AI does not get the final say.

- A ban on predictive policing. No more criminalising people based on their postcode or the colour of their skin. That’s not justice; it’s algorithmic segregation.

- The right to anonymity and encryption. The freedom to be online without being unmasked. Encryption isn’t shady; in this world, it’s about survival.

- The right to control and delete our data. To be able to see what’s held on us and get rid of it completely. No hidden menus, no 30-day waiting periods. Just gone.

- Transparency for AI. If an algorithm is being used on you, its logic and the data it was trained on should be open to scrutiny. No more black boxes affecting our lives.

And we need to go further, making sure these rights protect everyone, especially those most often targeted. That means mandatory, public audits for bias in every major AI system. A ban on biometric surveillance in our public spaces. And the right for our communities to have a say in how their culture and data are used.

Once this becomes law, everything changes. Consent becomes real. Transparency becomes the norm. Power shifts.

Honestly, you can’t private-browse your way out of this. You can’t just tweak your settings and hope for the best. The only way forward is together. A Digital Bill of Rights isn’t just a policy document; it’s a collective statement. It’s a creative, hopeful project we can all be a part of. It’s us saying, with one voice: you don’t own us, and you don’t get to decide what our future looks like.

This is so much bigger than privacy. It’s about our sovereignty as human beings. The tech platforms have kept us isolated on purpose, distracted and fragmented. But when we stand together and demand consent, transparency, and the simple power to say no, that’s the moment everything shifts. That’s how real change begins – not with permission, but with a shared sense of purpose and a bit of good-humoured, resilient pressure. They built this techno-nightmare thinking no one would ever organise against it. Let’s show them they were wrong.

The time is now. With every new development, the window for action gets a little smaller. Let’s demand a Citizen’s Bill of Digital Rights and Protections from our MPs and support groups like Amnesty, Liberty, and the Open Rights Group. Let’s build a digital world that reflects the best of us: one that is creative, kind, and truly free.

Say no to digital IDs here: https://petition.parliament.uk/petitions/730194

Sources

- Patient privacy fears as US spy tech firm Palantir wins £330m NHS …

- UK police forces dodge questions on Palantir – Good Law Project

- Coventry City Council contract with AI firm Palantir under review – BBC

- Data (Use and Access) Act 2025: data protection and privacy changes

- UK Data (Access and Use) Act 2025: Key Changes Seek to …

- Online tracking | ICO

- protection compliance in the direct marketing data broking sector

- Data brokers and national security – GOV.UK

- Online advertising and eating disorders – Beat

- Investment in British AI companies hits record levels as Tech Sec …

- The Data Use and Access Act 2025: what this means for employers …

- AI tools used by English councils downplay women’s health issues …

- Automated Racism Report – Amnesty International UK – 2025

- Automated Racism – Amnesty International UK

- UK use of predictive policing is racist and should be banned, says …

- Government announced unprecedented facial recognition expansion

- Government expands police use of live facial recognition vans – BBC

- Sainsbury’s tests facial recognition technology in effort to tackle …

- ICO Publishes Report on Compliance in Direct Marketing Data …

- International AI Safety Report 2025 – GOV.UK

- Revealed: bias found in AI system used to detect UK benefits fraud

- UK: Police forces ‘supercharging racism’ with crime predicting tech

- AI tools risk downplaying women’s health needs in social care – LSE

- AI and the Far-Right Riots in the UK – LSE

- Unprecedented Expansion of Facial Recognition Is “Worrying for …

- The ethics behind facial recognition vans and policing – The Week

- Sainsbury’s to trial facial recognition to catch shoplifters – BBC

- No Palantir in the NHS and Corporate Watch Reveal the Real Story

- UK Data Reform 2025: What the DUAA Means for Compliance

- Advancing Digital Rights in 2025: Trends – Oxford Martin School

- Declaration on Digital Rights and Principles – Support study 2025

- Advancing Digital Rights in 2025: Trends, Challenges and … – Demos

Like many writers in 2026, I use AI tools as part of my process, in this case Grok, Perplexity, Grammarly and Gemini, to help with research, brainstorming, exploration, generating initial drafts, and organising complex thoughts. However, this blog is not AI-generated content.

Every post is the result of my own research, lived experience, and extensive rewriting.

The final arguments, metaphors, and conclusions are mine alone. I disclose this because I believe the fight for human creativity begins with honesty about how we create.
